Student Answer Forecasting

Transformer-driven prediction of students’ answer choices in a language-learning ITS

Beyond “Correct or Incorrect”: Forecasting Which Answer a Student Will Choose

Most student models in Intelligent Tutoring Systems (ITS) aim to predict whether a learner will answer a question correctly. This is useful, but it completely disregards why the student might be struggling. In a multiple-choice question (MCQ), the specific option a student selects often reveals a misconception, a confusable grammar rule, or a systematic misunderstanding.

This research project introduces student answer forecasting: instead of predicting correctness, we predict the likelihood of choosing each answer option. That shift unlocks two practical benefits:

- Richer diagnostics: recurring selection of the same distractor can signal a stable misconception.

- Modular answer choices: forecasting is performed per (question, option) pair, so educators can add/remove/rewrite options without needing to retrain a monolithic multi-class model.

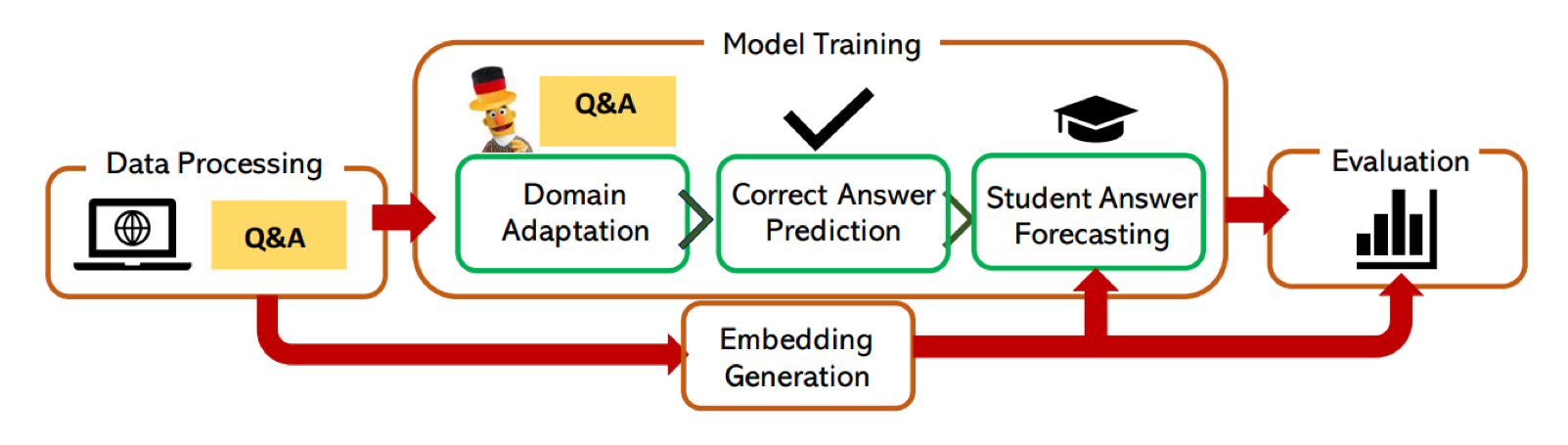

A Four-Stage Pipeline: From Raw ITS Logs to Answer-Choice Forecasting

At the core of the work is MCQStudentBert, a transformer-based pipeline that combines:

1) the text of questions and answer choices, and

2) a compact student embedding distilled from the student’s interaction history.

Stage 1 — Data processing (turning interactions into learning signals)

We start from raw ITS logs and reshape them into a format suitable for transformers and sequential models:

- Each student has a time-ordered history of MCQ interactions.

- Each MCQ is expanded into (question, candidate answer choice) instances.

- Labels indicate whether the student selected that choice (supporting multi-response settings when applicable).

This “expand-into-binary-instances” view is what later makes answer choices modular: each option becomes its own prediction target.
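The expansion step above can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual preprocessing code: the `Interaction` record, its field names, and the example question are all assumptions made for the sketch.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    """One logged MCQ attempt (hypothetical schema, not the real log format)."""
    student_id: str
    timestamp: int
    question: str
    options: tuple[str, ...]
    selected: frozenset[int]  # indices of the options the student chose


def expand_to_instances(interaction):
    """Turn one MCQ attempt into one binary instance per answer option."""
    return [
        {
            "student_id": interaction.student_id,
            "timestamp": interaction.timestamp,
            "question": interaction.question,
            "option": option,
            # 1 if the student selected this option, else 0.
            "label": int(index in interaction.selected),
        }
        for index, option in enumerate(interaction.options)
    ]


def build_dataset(interactions):
    """Order attempts per student by time, then expand each into instances."""
    ordered = sorted(interactions, key=lambda i: (i.student_id, i.timestamp))
    return [row for attempt in ordered for row in expand_to_instances(attempt)]


# Illustrative attempt: the student picked the second option ("habe").
attempt = Interaction(
    student_id="s1",
    timestamp=1,
    question="Ich ___ gestern ins Kino gegangen.",
    options=("bin", "habe", "war"),
    selected=frozenset({1}),
)
labels = [row["label"] for row in expand_to_instances(attempt)]
print(labels)  # [0, 1, 0]
```

Because `selected` is a set of indices, the same code covers multi-response questions: several options of one MCQ can carry the label 1.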

Stage 2 — Student embeddings (compressing history into a vector)

A central question is: what is the best way to represent a student’s past interactions?

We compared four families of embedding strategies:

- MLP autoencoder (feature-based): uses engineered mastery-style features and learns a compressed representation.

- LSTM autoencoder (sequence-based): encodes temporal dynamics from ordered interaction sequences.

- LernnaviBERT (domain-adapted German BERT): fine-tunes a German BERT model on the ITS domain to better embed question–answer text.

- Mistral 7B Instruct (LLM-based embeddings): builds embeddings from recent interaction text with longer-context capacity; mean pooling over hidden states yields a compact student representation.
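The mean-pooling step mentioned for the LLM-based embeddings can be illustrated with a small sketch. It assumes the hidden states arrive as one vector per token and that padded positions are marked with 0 in an attention mask; skipping padded tokens is a common convention assumed here, not a detail confirmed by the source.

```python
def mean_pool(hidden_states, attention_mask):
    """Average token vectors into one vector, skipping positions where mask == 0."""
    kept = [vec for vec, keep in zip(hidden_states, attention_mask) if keep]
    if not kept:
        raise ValueError("attention_mask selects no tokens")
    dim = len(kept[0])
    return [sum(vec[d] for vec in kept) / len(kept) for d in range(dim)]


# Three token vectors; the last one is padding and is ignored.
hidden = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]
print(mean_pool(hidden, mask))  # [2.0, 3.0]
```

The result has the same dimensionality as a single hidden state regardless of how long the interaction text is, which is what makes it usable as a fixed-size student representation.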

Why Pretrain on Correct Answers First?

Before forecasting students’ choices, we first train a model (MCQBert) to predict the correct answer(s) from text alone. This step matters because it separates two failure modes:

- The model doesn’t understand the MCQ content (poor “correct answer” competence), vs.

- The model understands the content, but needs student context to predict which distractor a given learner will pick.

Only after the model has learned correct-answer structure do we inject student embeddings and fine-tune for answer-choice forecasting.

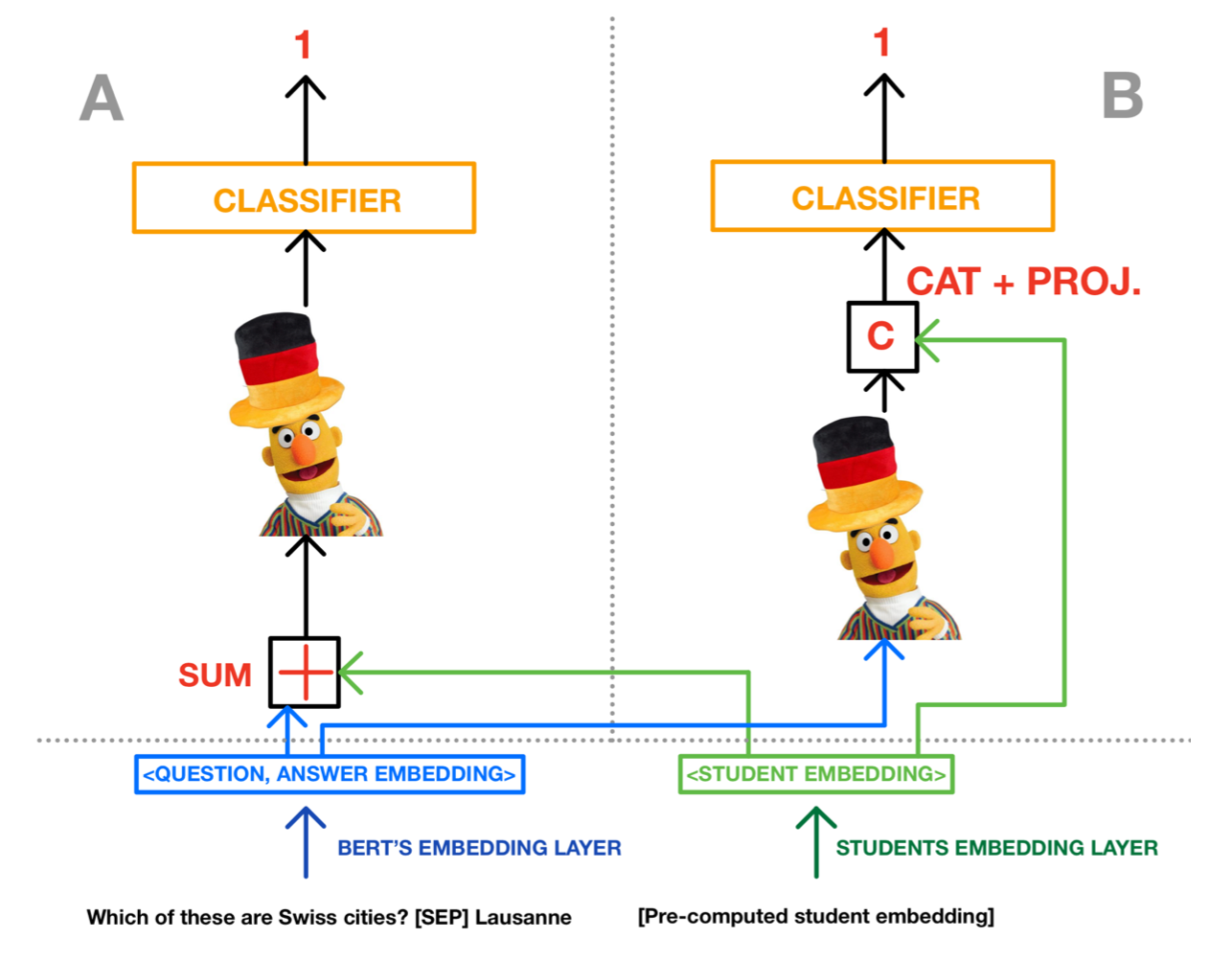

MCQStudentBert: Fusing Student History with Question Understanding

We explore two integration strategies that inject student context into a BERT-based answer predictor:

1) Concatenation at the decision stage (MCQStudentBertCat)

Student embedding and MCQ representation are kept distinct until the end:

- BERT encodes the (question, option) text into a [CLS] representation

- The student embedding is projected to the right dimensionality

- Concatenate → classify

This approach preserves the base language processing and lets the classifier “reason” jointly over both sources of information.

2) Addition at the input stage (MCQStudentBertSum)

Student embedding is projected and added into the input embedding space before transformer encoding:

- Student context acts like a “bias” shaping how the MCQ text is interpreted

- Useful as an early fusion baseline inspired by multimodal embedding fusion
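The difference between the two fusion points can be made concrete with a toy sketch. Everything here is a stand-in: `toy_encoder` simply averages token vectors in place of BERT, the weights are hand-picked, and adding the projected student vector to every token embedding is one plausible reading of "added into the input embedding space", not a confirmed detail of MCQStudentBertSum.

```python
import math


def linear(vector, weights, bias):
    """Dense layer; `weights` holds one row per output unit."""
    return [sum(w * x for w, x in zip(row, vector)) + b
            for row, b in zip(weights, bias)]


def toy_encoder(token_embeddings):
    """Stand-in for BERT: averages token vectors into one [CLS]-like vector."""
    n = len(token_embeddings)
    return [sum(column) / n for column in zip(*token_embeddings)]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forecast_cat(tokens, student, proj_w, proj_b, clf_w, clf_b):
    """Late fusion: encode the text, then concatenate the projected student vector."""
    cls = toy_encoder(tokens)
    student_proj = linear(student, proj_w, proj_b)
    fused = cls + student_proj  # list concatenation: classifier sees both parts
    return sigmoid(linear(fused, [clf_w], [clf_b])[0])


def forecast_sum(tokens, student, proj_w, proj_b, clf_w, clf_b):
    """Early fusion: shift every token embedding by the student vector, then encode."""
    student_proj = linear(student, proj_w, proj_b)
    shifted = [[t + s for t, s in zip(token, student_proj)] for token in tokens]
    cls = toy_encoder(shifted)
    return sigmoid(linear(cls, [clf_w], [clf_b])[0])


tokens = [[1.0, 0.0], [0.0, 1.0]]          # two tokens, hidden size 2
student = [2.0]                             # 1-dimensional student embedding
proj_w, proj_b = [[0.5], [-0.5]], [0.0, 0.0]  # projects student to hidden size 2

p_cat = forecast_cat(tokens, student, proj_w, proj_b, [1.0, 1.0, 1.0, 0.0], 0.0)
p_sum = forecast_sum(tokens, student, proj_w, proj_b, [1.0, 1.0], 0.0)
print(round(p_cat, 3), round(p_sum, 3))
```

Note the structural consequence: with concatenation the classifier input is twice the hidden size and the encoder never sees the student, while with summation the classifier input stays at the hidden size and the student signal passes through the encoder.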

Real-World Evaluation on a Large-Scale German ITS Dataset

We evaluate on language-learning MCQs from Lernnavi, a real-world ITS used at scale:

- 10,499 students

- 138,149 interactions (transactions)

- 237 unique MCQs

We report accuracy alongside two metrics that are more robust to class imbalance:

- Matthews Correlation Coefficient (MCC)

- F1 score

- Accuracy
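To see why accuracy alone can mislead on imbalanced labels, the three metrics can be computed directly from confusion counts. The sketch below uses made-up labels (41 positives, 59 negatives), not the Lernnavi data. It computes the binary F1 of the positive class; the averaging scheme behind the reported F1 scores is not stated here and may differ. Returning 0 for MCC when the denominator is zero is a common convention, assumed in this sketch.

```python
import math


def confusion_counts(y_true, y_pred):
    """Return (tp, tn, fp, fn) for binary labels in {0, 1}."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy(tp, tn, fp, fn):
    return (tp + tn) / (tp + tn + fp + fn)


def f1_positive(tp, tn, fp, fn):
    """Binary F1 of the positive class; 0.0 when there are no positives at all."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient; 0.0 by convention if undefined."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


# A majority-class predictor on illustrative, imbalanced labels.
y_true = [1] * 41 + [0] * 59
y_pred = [0] * 100
counts = confusion_counts(y_true, y_pred)
print(accuracy(*counts))     # 0.59
print(f1_positive(*counts))  # 0.0
print(mcc(*counts))          # 0.0
```

A predictor that never selects any option still reaches 59% accuracy on these labels, while MCC correctly reports no correlation with the truth.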

Results: Student Context Helps, and LLM Embeddings Work Best

Across embeddings and fusion strategies, injecting student context consistently improves answer forecasting over:

- A dummy majority baseline

- A text-only model (MCQBert) that ignores student history.

A representative summary of our trained models:

| Model | Metric | Dummy | MCQBert | MLP | LSTM | LernnaviBERT | Mistral 7B |
|---|---|---|---|---|---|---|---|
| MCQStudentBertCat | MCC | 0 | 0.518 | 0.557 | 0.567 | 0.575 | 0.579 |
| | F1 Score | 0.305 | 0.740 | 0.772 | 0.777 | 0.780 | 0.782 |
| | Accuracy | 0.590 | 0.771 | 0.785 | 0.790 | 0.795 | 0.797 |
| MCQStudentBertSum | MCC | 0 | 0.518 | 0.552 | 0.564 | 0.568 | 0.569 |
| | F1 Score | 0.305 | 0.740 | 0.767 | 0.774 | 0.777 | 0.778 |
| | Accuracy | 0.590 | 0.771 | 0.785 | 0.790 | 0.789 | 0.789 |

Two practical takeaways:

- Concatenation matches or outperforms summation on every metric for every embedding, though the margins are small (at most 0.010 MCC).

- Mistral 7B embeddings perform best overall, suggesting that longer-context LLM representations capture meaningful structure in students’ interaction histories.

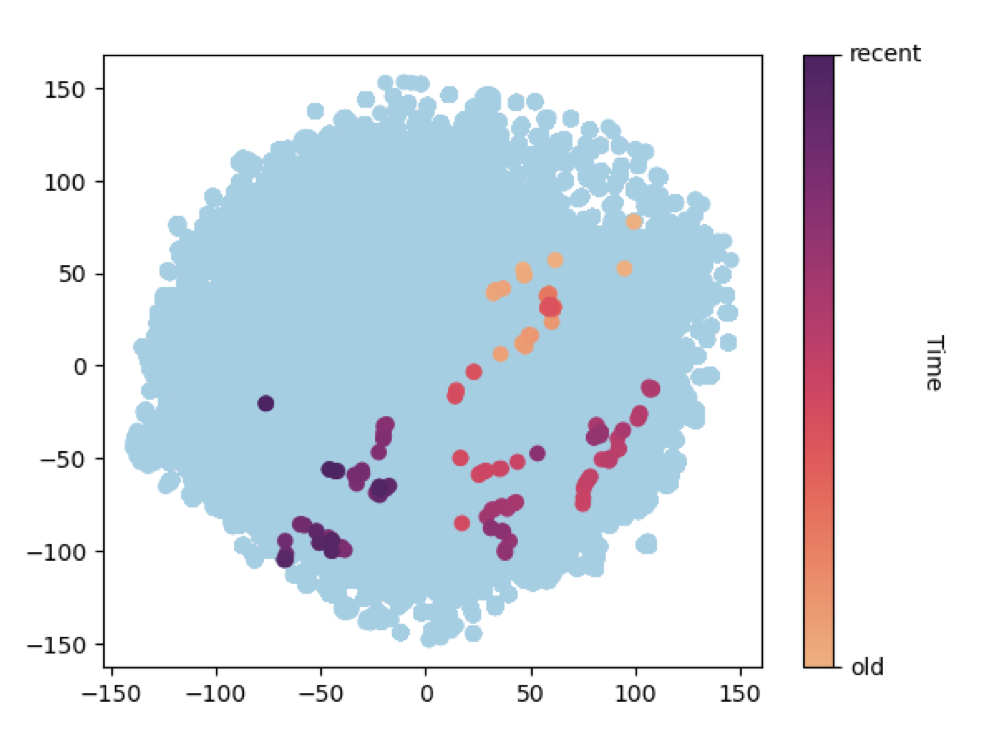

What the Embedding Space Reveals: Learning as a Trajectory

To make the student representations more interpretable, we visualize embeddings over time using t-SNE. One striking pattern is that a single student’s embedding often evolves smoothly as they answer more questions, suggesting that the representation captures a moving “state” rather than a static profile.

Why This Matters for Intelligent Tutoring Systems

Answer-choice forecasting enables interventions that correctness-only prediction can’t support:

- Misconception-aware hints: target the specific distractor a student is likely to pick.

- Dynamic distractors: personalize which options are shown to increase diagnostic value.

- Safe content updates: add a new option (e.g., a new misconception) without retraining a multi-class model.

- Granular analytics: track how distractor likelihood changes after an explanation or practice session.
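As a rough illustration of how per-option forecasts could drive a misconception-aware hint, the sketch below scores each option independently and picks the most likely distractor. The `score_fn` callable stands in for a trained forecasting model and is hypothetical, as are the example probabilities.

```python
def forecast_options(question, options, student_vec, score_fn):
    """Score every (question, option) pair independently for one student."""
    return {option: score_fn(question, option, student_vec) for option in options}


def most_likely_distractor(forecast, correct_options):
    """Return the incorrect option with the highest predicted selection probability."""
    distractors = {o: p for o, p in forecast.items() if o not in correct_options}
    if not distractors:
        return None
    return max(distractors, key=distractors.get)


# Hypothetical stand-in for a trained model: a fixed lookup table.
made_up_scores = {"bin": 0.35, "habe": 0.55, "war": 0.10, "hatte": 0.20}


def stub_score_fn(question, option, student_vec):
    return made_up_scores[option]


question = "Ich ___ gestern ins Kino gegangen."
forecast = forecast_options(question, ["bin", "habe", "war"], [0.0], stub_score_fn)
print(most_likely_distractor(forecast, correct_options={"bin"}))  # habe

# Adding a new option is just one more scored pair; nothing else changes.
extended = forecast_options(question, ["bin", "habe", "war", "hatte"], [0.0], stub_score_fn)
```

Because each option is scored on its own, the scores are independent probabilities rather than a distribution that sums to 1, which is what allows options to be added or removed freely.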

Acknowledgements

Source of all images and table: “Student Answer Forecasting: Transformer-Driven Answer Choice Prediction for Language Learning”, E.G. Gado et al.